Social media platforms aren't just showing you content you might like. Large social platforms use engagement-prolonging interface designs that can pressure, entice, trap, and lull teens into spending more time online than they ever intended. The gap between "just checking my feed" and two hours later is not an accident. It is the product of deliberate design choices layered on top of machine learning systems that get smarter about holding your attention every single time you scroll. This guide breaks down exactly how that manipulation works, what the research actually shows, and what genuine agency looks like in 2026.

Table of Contents

- The hidden tactics: Engagement-prolonging designs and interface traps

- Personalization and feedback loops: How algorithms learn and reinforce

- Manipulation beyond entertainment: Political, social, and emotional consequences

- Can algorithmic literacy help? The limits and paradoxes of awareness

- The uncomfortable truth: What most guides get wrong about algorithmic manipulation

- Take back your feed: Try a platform designed for authenticity

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Algorithms exploit attention | Platforms use engineered design and feeds to keep you engaged far longer than intended. |
| Reinforcement is rapid | Algorithmic feedback can quickly amplify your interests, narrowing content diversity within days. |
| Manipulation is broader than you think | Algorithmic feeds can shape not just tastes but opinions and even mental wellbeing risks. |
| Knowledge alone isn’t enough | Being savvy about how platforms work doesn’t always translate into better habits or escape from manipulation. |
| Real agency is possible | Choosing platforms that avoid manipulative algorithms is the most effective way to regain control of your online life. |

The hidden tactics: Engagement-prolonging designs and interface traps

Now that you understand the stakes, let's break down exactly how platforms engineer experiences to keep you engaged. The manipulation is not random. It follows a recognizable playbook built around four core strategies.

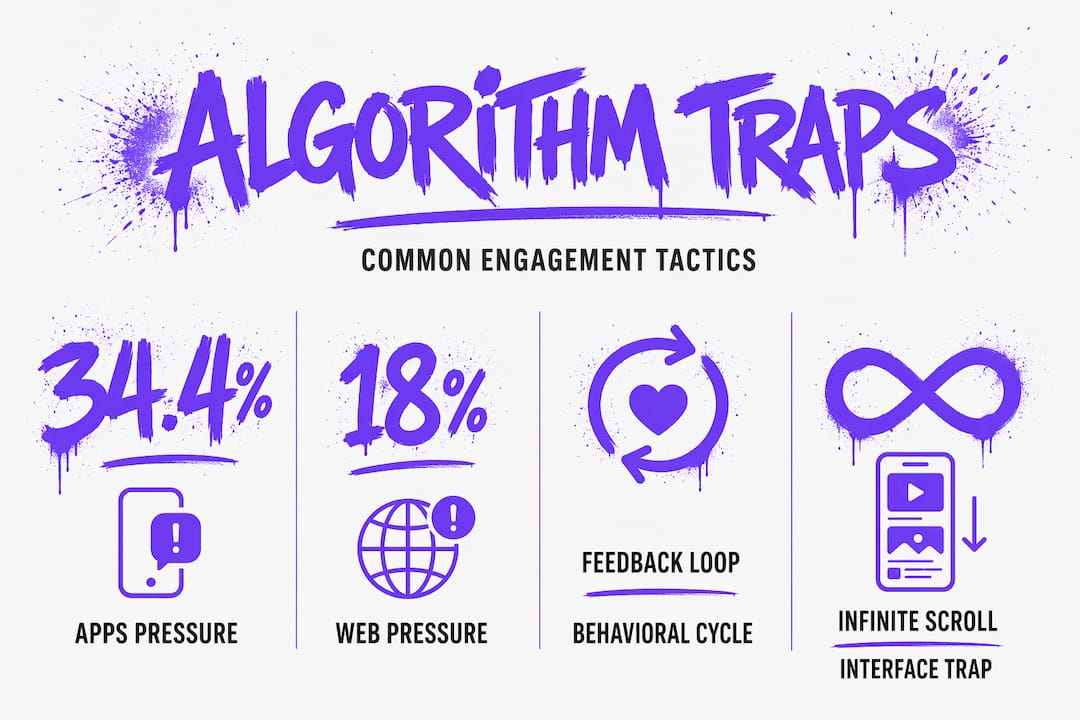

The four engagement strategies, defined:

- Pressuring involves urgency signals like notification badges, streak counters, and "X people liked your post" alerts that push you to respond immediately. This is the most common tactic in apps, appearing in 34.4% of app-based designs studied in recent audits.

- Enticing uses reward mechanics like likes, comments, and curated "For You" feeds to make the next piece of content feel irresistible. The unpredictability of what reward comes next is borrowed directly from behavioral psychology.

- Trapping refers to design patterns that make it difficult to leave. Infinite scroll removes natural stopping points. Autoplay removes the need to make an active choice. These are not conveniences. They deliberately strip out the friction that would otherwise prompt you to stop, so that continuing takes less effort than leaving.

- Lulling is subtler. It involves pacing content in a way that keeps your nervous system calm and your guard down, so you stay longer without realizing it.

Here is how these tactics compare across apps and websites:

| Tactic | Prevalence in apps | Prevalence in websites | Primary mechanism |
|---|---|---|---|
| Pressuring | 34.4% | 18.2% | Notifications, streaks, urgency cues |
| Enticing | 28.7% | 22.5% | Reward feeds, curated content |
| Trapping | 24.1% | 19.8% | Infinite scroll, autoplay |
| Lulling | 12.8% | 9.5% | Paced content, ambient sound |

Apps are significantly more aggressive than websites across every category. That matters because most Gen Z and Millennial users access social media primarily through mobile apps, meaning they are exposed to the highest concentration of these tactics daily.

What makes this especially relevant is how these UX patterns feed the algorithm itself. Every time you respond to a pressure cue, click an enticing recommendation, or stay on the platform because leaving feels like effort, you are generating an engagement signal. The algorithm reads that signal as proof that this type of content, at this time, in this format, works for you. It files that away and doubles down.

Understanding mental wellness and design means recognizing that the interface is not neutral. It is a feedback mechanism that trains the algorithm while simultaneously training your behavior. You can also explore platforms without manipulative design to see what a different approach actually looks like in practice.

Pro Tip: When you notice infinite scroll, streaks, or urgency notifications on any platform, treat them as warning signs that the design is working against your autonomy. These are not features. They are retention tools.

Personalization and feedback loops: How algorithms learn and reinforce

Once platforms hook you with sticky design, here's what happens next: algorithms quickly mold your experience to what you've signaled so far. The speed of this process surprises most people.

Here is how the reinforcement cycle works, step by step:

- You signal an interest. You pause on a video about a specific topic, watch it to the end, or interact with it in any way. That pause is data.

- The algorithm amplifies. Within a short window of continued use, the platform begins serving more content in that category. It is not waiting for confirmation. It is acting on early signals immediately.

- Your engagement increases. Because the content now matches your demonstrated interest, you naturally engage more. This looks like success to the algorithm.

- Diversity drops. As your feed becomes more precisely targeted, content from outside your established interests appears less often. Hashtag exploration falls. New topics surface less frequently.

- The bubble solidifies. Breaking out requires deliberate, sustained effort because every interaction you make reinforces the existing pattern.
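The cycle above can be sketched as a toy simulation. This is a minimal illustration under stated assumptions, not any platform's actual ranking system: the function name `simulate_feed`, the topic names, the engagement rates, and the learning rate are all hypothetical values chosen for demonstration.

```python
def simulate_feed(engagement_rates, rounds=200, learning_rate=0.05):
    """Return the share of the feed given to each topic at every round.

    engagement_rates maps a topic to how often the (hypothetical) user
    engages with it, on a 0.0 to 1.0 scale.
    """
    # Every topic starts with the same weight, so the first feed is even.
    weights = {topic: 1.0 for topic in engagement_rates}
    history = []
    for _ in range(rounds):
        total = sum(weights.values())
        shares = {topic: weight / total for topic, weight in weights.items()}
        history.append(shares)
        # Reinforcement step: topics the user engages with more are
        # boosted more, so they take a larger share of the next round.
        for topic, rate in engagement_rates.items():
            weights[topic] *= 1.0 + learning_rate * rate
    return history


# Hypothetical user: strongly engages with one topic, mildly with the rest.
rates = {"cooking": 0.9, "news": 0.2, "sports": 0.2, "music": 0.2, "travel": 0.2}
history = simulate_feed(rates)

start_share = history[0]["cooking"]
end_share = history[-1]["cooking"]
print(f"cooking share: {start_share:.0%} at start, {end_share:.0%} at the end")
```

Because the topic with the highest engagement rate receives the largest boost each round, its share of the feed rises every round while the others shrink. That mirrors the narrowing described in the steps above, although the specific percentages this toy model produces are not meant to match any measured figure.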

A sock-puppet audit, a research method where automated accounts simulate user behavior to measure algorithmic response, found that rapid amplification of interest-aligned content typically occurs within the first 200 videos, while content diversity and hashtag exploration fall measurably. Two hundred videos sounds like a lot until you realize that is roughly two to three hours of use on a typical short-form video platform.
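The two-to-three-hour estimate can be checked with simple arithmetic. The per-video watch times below are assumptions for illustration, not figures from the audit, and `viewing_hours` is a hypothetical helper name.

```python
def viewing_hours(video_count, seconds_per_video):
    """Convert a number of videos and an average watch time into hours."""
    return video_count * seconds_per_video / 3600


# Assumed average watch times per short-form video, in seconds.
for seconds in (36, 45, 54):
    hours = viewing_hours(200, seconds)
    print(f"{seconds}s per video -> {hours:.1f} hours for 200 videos")
```

Under these assumptions, 200 videos take between two and three hours, which is consistent with the estimate above. Shorter average watch times would shrink that window further.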

The critical insight here is that platforms don't just rank posts. They reinforce what you interact with, training on your own curiosity and habits. The algorithm is not reading your mind. It is reading your behavior and then shaping the environment to produce more of that behavior.

| Metric | Early feed (first 50 videos) | Established feed (after 200 videos) |
|---|---|---|
| Interest-aligned content | ~40% | ~78% |
| Content diversity index | High | Significantly reduced |
| Hashtag variety | Broad | Narrowed to 3-5 clusters |
| Cross-topic recommendations | Frequent | Rare |

This trade-off is the core tension of algorithmic personalization. Your feed gets better at giving you what you've already shown interest in, but it gets worse at showing you what you don't yet know you might care about. That erosion of discovery is not a bug. It is a consequence of optimizing for engagement over exploration.
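One way to make a "content diversity index" concrete is Shannon entropy over the topics in a feed. The metric the audit actually used is not specified here, so treat this as an assumed stand-in. The function name `shannon_diversity` and both sample feeds are made up for illustration.

```python
import math
from collections import Counter


def shannon_diversity(topics):
    """Shannon entropy (in bits) of the topic mix in a feed.

    Higher values mean attention is spread across more topics;
    0 means every item belongs to the same topic.
    """
    counts = Counter(topics)
    total = len(topics)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Hypothetical feeds of 20 items each.
early_feed = ["cooking", "news", "sports", "music"] * 5        # evenly mixed
narrow_feed = ["cooking"] * 17 + ["news", "sports", "music"]   # one topic dominates

print(f"early feed diversity:  {shannon_diversity(early_feed):.2f} bits")
print(f"narrow feed diversity: {shannon_diversity(narrow_feed):.2f} bits")
```

Both sample feeds contain the same four topics, but the second scores much lower because one topic crowds out the rest. A measure like this captures the erosion of discovery even when no topic has disappeared from the feed entirely.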

You can learn more about how feedback loops and recommendation systems work, and why some platforms are now rethinking this entirely. For families navigating youth content recommendation, the stakes are even higher. Exploring algorithm-free features is one concrete way to see what a different model looks like.

Manipulation beyond entertainment: Political, social, and emotional consequences

All this isn't just theory or entertainment. The consequences can be deeply personal, societal, and even political. The research is clear and, in some cases, striking.

A randomized study found that switching to an algorithmic feed shifted users' political attitudes and increased engagement. When the same users were placed on a chronological feed, the attitudinal shift disappeared. That is a direct, measurable link between feed ranking and political belief. Not opinion. Belief.

For adolescents and young adults, the risks extend well beyond politics. Algorithmic personalization can affect attention and engagement and can raise mental wellbeing risks, especially in youth, according to the U.S. Surgeon General's advisory on youth mental health and social media. This is not a fringe concern. It is a public health framing.

The most studied consequences for Gen Z and Millennials include:

- Negative peer comparison. Algorithms prioritize high-performing content, which means users are disproportionately exposed to idealized versions of other people's lives, bodies, and achievements.

- Radicalization pathways. Incremental content escalation, where each video is slightly more extreme than the last, has been documented across multiple platforms and topic areas.

- Anxiety and compulsive checking. The pressure tactics described earlier create a low-grade urgency that can persist even when users are not actively on the platform.

- Reduced attention span. Constant optimization for short bursts of high-engagement content trains users to expect rapid stimulation, making sustained focus harder over time.

- Echo chambers and polarization. As content diversity falls, exposure to different perspectives shrinks, reinforcing existing beliefs and reducing tolerance for complexity.

These are not abstract risks. They are patterns that researchers, clinicians, and public health officials have documented with increasing specificity. Reviewing online safety guidance is a practical starting point, and understanding alternatives to algorithmic feeds is a logical next step for anyone who wants to act on this information.

Can algorithmic literacy help? The limits and paradoxes of awareness

Some argue that just being "smart" about algorithms can protect you. Let's see what the evidence and lived experience actually show.

The honest answer is: awareness helps, but not as much as most people hope. Greater algorithmic knowledge among young adults can increase concern about misinformation, but may not lead to corrective or cross-perspective engagement. In other words, knowing more about how feeds work can make you more worried without making you more likely to actually change your behavior or seek out different viewpoints.

This is the algorithmic literacy paradox. The more you understand the system, the more you may disengage from it emotionally, but disengagement is not the same as taking action. Some users become cynical rather than empowered, scrolling with a sense of resignation rather than genuine agency.

Here is what algorithmic literacy actually does and does not do:

- It does increase awareness. Users who understand how feeds work are more likely to recognize when they are being manipulated.

- It does not automatically change behavior. Recognition and action are separate cognitive steps, and the gap between them is where most people get stuck.

- It can increase anxiety. Knowing the system is working against you without having a clear alternative can feel disempowering rather than liberating.

- It does support better platform choices. Users with higher algorithmic literacy are more likely to seek out algorithm-free apps and non-manipulative environments when they know those options exist.

- It works best when paired with structural alternatives. Literacy plus access to different platforms produces better outcomes than literacy alone.

Pro Tip: Instead of trying to outsmart the algorithm, try shifting your frame entirely. Ask not "how do I resist this?" but "does this platform's incentive structure align with what I actually want from social media?" That question leads to clearer, more actionable decisions.

The uncomfortable truth: What most guides get wrong about algorithmic manipulation

Let's look beyond the usual "just be informed" advice and face the real dilemma most young people encounter every day. Most articles on this topic end with a list of tips: turn off notifications, use a timer, follow diverse accounts. These are not wrong. But they treat the symptom, not the cause.

The deeper problem is that conventional digital literacy advice is built on the assumption that users can out-think systems designed by teams of engineers with access to behavioral data at a scale no individual can match. That assumption is flattering but false. You are not failing because you lack willpower or knowledge. You are navigating an environment that was built, from the ground up, to override both.

Many users become more cynical as they learn more, and cynicism is not agency. It is a form of learned helplessness dressed up as sophistication. Scrolling knowingly through a manipulative feed is still scrolling through a manipulative feed.

True agency looks different. It means choosing platforms whose incentive structures do not depend on maximizing your time-on-site. It means prioritizing spaces where connection is the product, not the mechanism for selling attention to advertisers. It means recognizing that the question is not just "how do I use this platform better?" but "does this platform deserve my presence at all?"

The deep dives on manipulation available from researchers and advocates are worth reading, not to become more anxious, but to become more deliberate. The goal is not to opt out of digital life. It is to opt into digital environments that treat you as a person rather than a data point.

Non-algorithmic and human-curated experiences are not nostalgic fantasies. They are a design philosophy. And they are increasingly available to anyone willing to look for them.

Take back your feed: Try a platform designed for authenticity

Armed with new clarity, here's how you can put this knowledge into practice and reclaim your online life.

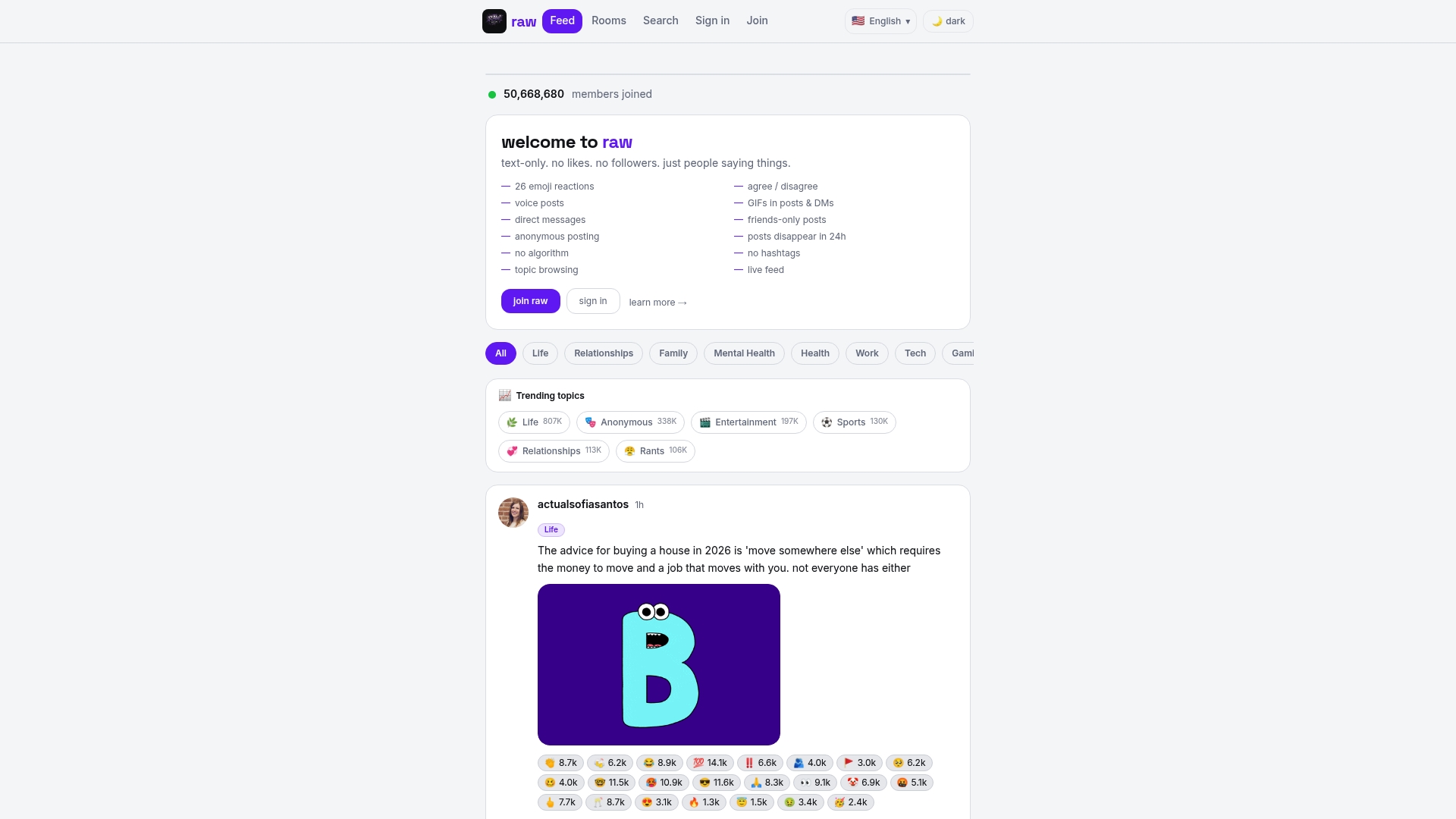

RAW Social was built on a simple premise: authentic connection should not require performing for an algorithm. There are no likes, no follower counts, no engagement metrics, and no recommendation engine deciding what you see next. What you get instead is a space where conversations happen because people want to have them, not because an interface pressured them into it.

If the research in this article resonated with you, the practical next step is to explore what a platform looks like when the absence of algorithms is the foundation rather than the exception. RAW Social's wellness-focused approach means the design itself is not working against your wellbeing. For anyone ready to try a genuine alternative built for authentic connection, RAW Social is worth exploring. Not as a hard sell. As a logical conclusion to everything this article has laid out.

Frequently asked questions

What are the main signs an algorithm is manipulating my feed?

Look for endless scrolling, pressure to interact, reduced post diversity, or content that feels increasingly tailored to hold your attention. Large social platforms use engagement-prolonging interface designs specifically to produce these effects.

Does using a non-algorithmic feed actually make a difference?

Yes. In a randomized study, switching from an algorithmic to a chronological feed eliminated attitudinal shifts in users, indicating that algorithmic ranking, not the content itself, drives the manipulation effect.

Can better algorithm understanding protect me from manipulation?

Greater knowledge increases awareness but often does not lead to more corrective action. Greater algorithmic knowledge may not improve cross-perspective engagement or reduce susceptibility to misinformation.

How quickly can an algorithmic feed reinforce my interests?

Very quickly. Rapid reinforcement typically occurs within the first 200 videos watched, which can represent just a few hours of use on short-form video platforms.

What's the main risk to mental health from algorithmic feeds?

The primary risks include negative peer comparison, compulsive checking behaviors, and exposure to escalating content. According to the U.S. Surgeon General, the mental wellbeing risks of algorithmic personalization are most acute for young people.